Summary

In SQL Server 2016, Microsoft extended the SQL Server engine so that it can execute external code written in the R language. SQL Server 2017 added Python to the mix, and SQL Server 2019 integrated Java. The new functionality is part of SQL Server’s Machine Learning Services.

This blog explains how to set up SQL Server 2019 so we can use ML services and the Python programming language.

Prepare the environment for Python

The first step is to add the Machine Learning Services and Language Extensions feature, with Python selected, to our SQL Server environment.

The next step is to allow SQL Server to execute external scripts by turning on the external scripts enabled configuration option at the server level:

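-- make the advanced configuration options visible
EXEC sp_configure @configname = 'show advanced options', @configvalue = 1;
GO
RECONFIGURE WITH OVERRIDE;
GO
-- allow sys.sp_execute_external_script to run external code
EXEC sp_configure @configname = 'external scripts enabled', @configvalue = 1;
GO
RECONFIGURE WITH OVERRIDE;
GO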

At this stage, we should be ready to go.

Let’s explore how the SQL Server engine relates to the above-mentioned ML (Machine Learning) services. The two components are “bound” by the extensibility framework.

Launchpad

The Launchpad service, one of several SQL Server services, manages and executes external scripts, in our case scripts written in Python. It instantiates each external script session as a separate satellite process.

Figure 2, Extensibility framework

The purpose of the extensibility framework is to provide an interface between the SQL Server engine and Python (and other languages). Figure 2 shows how different components interact and demonstrates security concepts.

From a very high-level point of view, the process goes like this:

- Our application calls the system stored procedure sys.sp_execute_external_script. The procedure is designed to encapsulate code written in one of the external languages, Python in this case. The stored procedure executes within the SQL Server engine, Sqlservr.exe. The engine knows that the code it needs to run contains a program written in Python and therefore calls the Launchpad service (Launchpad.exe). Sqlservr.exe uses the Named Pipes protocol (often used for communication between processes on the same machine) to pass the code to be executed.

- The Launchpad service now invokes the launcher DLL specific to the language that needs to be run. In this case, it’s PythonLauncher.dll.

- PythonLauncher.dll now runs Python.exe/dll which compiles the program to bytecode and executes it.

- Python.exe then talks to yet another program, BxlServer.exe. Bxl stands for Binary Exchange Language. This program coordinates with the Python runtime to manage exchanges of data and storage of the working results.

- sqlsatellite.dll communicates with the SQL Server engine over the TCP/IP protocol and the ODBC interface. It retrieves input data sets and sends back result sets and Python’s standard I/O streams, stdout and stderr.

- BxlServer.exe communicates with Python.exe, passing messages back and forth from SQL Server through sqlsatellite.dll.

- SQL Server gets the result set, closes the related tasks and processes, and sends the results back to the client, e.g. SSMS.

Security

Security architecture for the extensibility framework can be observed in Figure 2.

- The client uses Windows/SQL login to establish a connection with SQL Server and execute Python code encapsulated in sys.sp_execute_external_script stored proc.

- Launchpad service runs as a separate process under its own account – NT Service\MSSQLLaunchpad$<instanceName>. The Windows account has all the necessary permissions to run external scripts and is a member of the SQLRUserGroup2019 Windows group.

Note: how to interact with the file system (import/export csv, xlsx, ...) or to access resources outside the server environment is explained later in this blog.

- When Launchpad invokes the appropriate runtime environment, in our case PythonLauncher.dll, it initiates an AppContainer and assigns the process to it. By default, the Launchpad service creates 21 containers, AppContainer00 – AppContainer20. The number of containers can be changed through the SQL Server Configuration Manager/SQL Server Launchpad/Advanced/Security Contexts Count property.

AppContainer is an internal mechanism that provides an isolated execution environment for the applications assigned to it. An application running in an AppContainer can only access resources specifically granted to it. This approach prevents the application from influencing, or being influenced by, other application processes.

- Launchpad maps the user (used to make the connection) to an AppContainer, which now defines credentials unique to the user/application pair. This also means that the application, in this case launchpad.exe, cannot impersonate database users and gain more access than it should have.

So, in a nutshell, a client connects to SQL Server using a SQL/Windows login mapped to a database user. The user runs sys.sp_execute_external_script with the Python code. SQL Server calls launchpad.exe, which runs under its own Windows account. The launcher then initiates one of the 21 AppContainers.

Firewall rules – how to access data from the Internet

As mentioned before, there are 21 separate application containers, AppContainer00 – AppContainer20. These objects restrict access for the applications assigned to them, in this case launchpad.exe. Each container has a Windows Firewall outbound rule that blocks its network access (Figure 3).

Figure 3, Windows Firewall outbound rules related to the AppContainers

If we disable these rules, our Python code, encapsulated in sys.sp_execute_external_script, will be able to communicate with the “outside world” and, e.g., get up-to-date FX (foreign exchange) rates, Bitcoin prices in different currencies, etc. (see the forex-python module). We can then, e.g., describe the result and send it back to the client/top-level procedure. It is also possible to “join” the data to a T-SQL query result, within the same query batch, and then send it back to the caller – amazing stuff 🙂

EXEC sys.sp_execute_external_script
     @language = N'Python' -- specify the language to use
    ,@script = N'
from pandas_datareader import data

start_date = "2021-11-20"
end_date = "2021-12-01"

# DEXUSAL is the FRED series holding the USD/AUD exchange rate
UsToAus = data.DataReader("DEXUSAL", "fred", start=start_date, end=end_date).reset_index()
print(UsToAus)
'
    ,@output_data_1_name = N'UsToAus' -- data frame returned as the result set
WITH RESULT SETS (([Date] DATE, [US to AUS] FLOAT));

In the example above, the code connects to FRED (Federal Reserve Economic Data, maintained by the Federal Reserve Bank of St. Louis, Missouri, USA) and pulls the exchange rates between the USD and AUD for a given timeframe.
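Before pulling real data, it can be handy to check whether the Python runtime is allowed to reach the outside world at all. Below is a minimal sketch that uses only the standard library; the function name can_reach and its default port and timeout are my own choices for illustration, not part of SQL Server or any of its packages.

```python
import socket


def can_reach(host: str, port: int = 443, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the host name and opens the socket
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused connections, timeouts, DNS failures and blocked
        # outbound traffic all surface as OSError subclasses.
        return False
```

Pasted into the @script parameter, a call such as print(can_reach("fred.stlouisfed.org")) would be expected to print False while the outbound rules are active and True once they are disabled, assuming the server itself has Internet access.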

File system accessibility – how to import/export result

Another cool thing that we can do with Python is to easily export results to disk, e.g. save a query result (report) in the form of an Excel file to a location where it can be picked up (pulled) by authorised users. It is also possible to read, e.g., a lookup table (extended customer information, states, employee information, etc.) from disk and join it with the T-SQL query result set, again within the same stored procedure call.

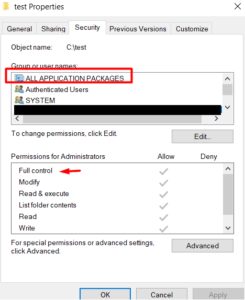

To enable interaction with the file system, we need to allow “SQL Server’s” Python to access specific folders. By default, Python can only access its working directory and its subdirectories, in this case c:\Program Files\Microsoft SQL Server\MSSQL15.SQL2019\PYTHON_SERVICES\. As mentioned before, the Launchpad.exe service runs under its own account. It starts the python.exe runtime environment in a process under the same account. Finally, it instantiates a new AppContainer object to contain the process’s activities. This container now acts as a Windows app with very limited access. Windows apps can access only those resources (files, folders, registry keys, and DCOM interfaces) to which they have been explicitly granted access (“ALL APPLICATION PACKAGES”). In practice, this means granting the ALL APPLICATION PACKAGES group the required permissions on the target folder.

Figure 4, ALL APPLICATION PACKAGES access rights

Now, we can use the script from another blog, Pivoting with Python, to export results to disk.

Figure 5, export results to disk
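To make the export/import idea a bit more concrete, here is a minimal sketch that writes rows to a CSV file and reads a lookup file back into a dictionary. It uses only the standard library, and the function names, the default file name and the column names in the usage note are hypothetical. Inside sys.sp_execute_external_script the rows would normally arrive as the InputDataSet pandas data frame; plain lists are used here to keep the example self-contained.

```python
import csv
from pathlib import Path


def export_rows(rows, header, target_dir, file_name="report.csv"):
    """Write the header and rows to target_dir/file_name; return the path.

    open() raises PermissionError when the account running Python
    has not been granted write access to target_dir.
    """
    path = Path(target_dir) / file_name
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(header)
        writer.writerows(rows)
    return path


def load_lookup(path, key_column, value_column):
    """Read a CSV lookup file into a {key: value} dictionary."""
    with open(path, newline="", encoding="utf-8") as handle:
        return {row[key_column]: row[value_column]
                for row in csv.DictReader(handle)}
```

For example, load_lookup(path, "code", "name") on a file of state codes returns a dictionary that can be used to decorate each query row before it is sent back to the caller. If the target folder has not been opened up to ALL APPLICATION PACKAGES, I would expect export_rows to fail with a PermissionError.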

Memory allocations

It is important to understand how ML Services fits into the SQL Server memory allocation scheme to be able to assess the possible impact it can have on database server performance.

As we can see from Figure 2, Python code runs outside of the processes managed by SQL Server. This means that it doesn’t use memory allocated to SQL Server.

The query below shows that, by default, the maximum memory that ML services can use, outside the memory allocated to SQL Server, is 20%. For example, with 256GB of total RAM and 200GB allocated to SQL Server, ML services can use up to 56 * 0.2 = ~11GB.

SELECT * FROM sys.resource_governor_external_resource_pools WHERE [name] = N'default'

Figure 6, External resource pools
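The arithmetic from the example above can be written as a small helper, which makes it easy to see the effect of a different max_memory_percent value. This is only a sketch of the calculation as described in this blog; the function name is made up for illustration and it does not query SQL Server.

```python
def ml_memory_limit_gb(total_ram_gb, sql_server_gb, max_memory_percent=20):
    """Upper bound (in GB) for external scripts, per the blog's arithmetic."""
    if not 1 <= max_memory_percent <= 100:
        raise ValueError("max_memory_percent must be between 1 and 100")
    remaining_gb = total_ram_gb - sql_server_gb
    if remaining_gb < 0:
        raise ValueError("SQL Server cannot be allocated more than total RAM")
    # Percentage of the memory left over after SQL Server's allocation
    return remaining_gb * max_memory_percent / 100


print(ml_memory_limit_gb(256, 200))      # default pool setting of 20%
print(ml_memory_limit_gb(256, 200, 50))  # pool setting raised to 50%
```

With the numbers used above, the first call gives roughly 11GB and the second 28GB.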

We can increase the max_memory_percent to e.g. 50% by altering the “default” external resource pool:

ALTER EXTERNAL RESOURCE POOL "default" WITH (max_memory_percent = 50);
GO
ALTER RESOURCE GOVERNOR RECONFIGURE;
GO

File locations and configurations

- launchpad.exe: ..\MSSQL15.SQL2019\MSSQL\Binn\Launchpad.exe

- pythonlauncher.log: ..\MSSQL15.SQL2019\MSSQL\Log\ExtensibilityLog\pythonlauncher.log

- Config file (Python): ..\MSSQL15.SQL2019\MSSQL\Binn\pythonlauncher.config

The config file holds the Python home directory, encoding standard, environment path, working directory (this is where Python stores intermediate results during the calculations), etc.

Python version

SQL Server 2019 supports Python 3.7.1. The package comes with the Anaconda 4.6.8 distribution. The language runtimes and various packages, e.g. pandas, are installed under the root directory:

c:\Program Files\Microsoft SQL Server\MSSQL15.SQL2019\PYTHON_SERVICES

To check the Python version, use the command prompt:

c:\Program Files\Microsoft SQL Server\MSSQL15.SQL2019\PYTHON_SERVICES>python --version

To check the version reported by conda, the Anaconda package manager:

c:\Program Files\Microsoft SQL Server\MSSQL15.SQL2019\PYTHON_SERVICES\Scripts>conda.exe --version
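The same information can also be read from inside the runtime, which is useful when we are not sure which interpreter actually executes our script. Below is a minimal sketch using only the standard library; runtime_info is a name I made up for this example. Since stdout is streamed back to the client, the printed lines should show up as messages when the code runs through sys.sp_execute_external_script.

```python
import platform
import sys


def runtime_info():
    """Collect basic facts about the interpreter running this code."""
    return {
        "version": platform.python_version(),  # major.minor.patch string
        "executable": sys.executable,          # full path of the interpreter
        "prefix": sys.prefix,                  # root of the Python installation
    }


for name, value in runtime_info().items():
    print(f"{name}: {value}")
```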

But wait, there is more 🙂

With SQL Server 2019 CU3+ (latest CU) we can use the Language Extension feature to “add” different versions of Python to the mix. In fact, we can add any language extension.

For example, I use Python 3.9.7, installed as part of the Anaconda 4.10.3 distribution, for work not related to the SQL Server platform. Wouldn’t it be cool if it was possible to use that installation in SQL Server?

Note: There are two packages developed by Microsoft specifically for the out-of-the-box Python installation: revoscalepy and microsoftml. These packages are not available in the distribution mentioned above.

Language extensions are a set of DLLs written in C++ that act as a bridge between SQL Server and the external runtime. This technology allows us to use relational data (query result sets) in the external code. The current Python language extension is open source, with the code available here. However, the code currently supports only Python 3.7.x. So, to be able to use Python 3.9 as the external language, we need to build our own “bridge”.

Here is an excellent article on how to do it. It looks complex, but once you start you’ll not be able to stop – it’s just so much fun.

Figure 7, Python 3.9 Language extension for SQL Server

In the next blog, I’ll present various dev tools we can use to construct our Python code in SQL Server. 🙂

Thanks for reading.

Dean Mincic

Figure 1, ML services
